By Samuel Fletcher, with additional input from Nick Solly and Chris Downton. These are early reflections from QR_'s hands-on experimentation with applying Large Language Models to Bill of Materials validation — one of the more promising AI use cases in PDM, and one where the constraints are more interesting than they first appear.

Traditional BoM validation is either done manually by engineers who understand the product — BoM audits, build matrices, commodity quantity checks — or the hard way, by physically building the product and finding the errors as they surface. Both routes are costly in time and material waste. QR_'s recent experiments have been looking for a third way: using foundation models to perform fast, repeatable, AI-powered BoM validation at scale. What follows are some of the early learnings.

As we've tried to productionise AI tools and make them genuinely useful to industry — rather than cool product teasers and 30-second demos — we've run into a specific friction. Large Language Model (LLM) token limits are at odds with the resource-hungry, largely unconstrained coding methods we've all become used to.

"Back in the day" coding had boundaries: bandwidth restrictions, limited processing power, tight storage. Those constraints forced developers to be efficient and mindful about length and complexity. Most of them have eased or disappeared over the last fifty years. We store "Production Intent" against every part rather than "P", because the extra few kilobytes cost nothing and the human readability is worth it. But with LLMs, we're back in a world where finite token limits demand maximum efficiency again.

A short introduction to the fundamentals

Most LLMs use a neural network architecture called a transformer. Transformers are well suited to language because they can ingest vast amounts of text, spot patterns in how words and phrases relate, and then predict which words should come next.

Text is "tokenised" — chunked into meaningful units the model can process and generate. A token can represent a character, a word, a sub-word, or a larger linguistic unit depending on the method (each model does it differently), and each token is assigned a unique identifier. LLMs are trained by mapping the relationships between those numerical IDs, which encode the semantic and contextual information of the text. When given a prompt, the model suggests which tokens come next.

Each model's architecture sets a token limit: the maximum number of tokens it can process at once — effectively the maximum combined length of prompt and output.

That matters the moment you try to apply an LLM to a specific domain and industrialise it beyond a product demo. These models are trained on very general corpora, so they're less effective for domain-specific tasks "straight out of the box". And every piece of data or context you ask the model to reason over has to fit under the token limit — which, for a Bill of Materials, can mean passing in only a handful of rows of raw data. Not particularly helpful.

Historical approaches to resource-constrained development

LLMs are new and industry applications are only just emerging, but the challenges echo the early days of computing — and the earlier answers are still instructive.

If we rewind fifty years, the constraints fall loosely into two groups:

- Storage limits imposed by the media used to transport software.

- Processing and memory limits on the machine running it.

Storage options have come a long way — from 70s floppy discs where your program had to fit under 80KB, through CDs and DVDs, to today's world where hard media barely features and network bandwidth is the main distribution bound. Processing and memory have moved equally far; the Apollo Moon Lander vs modern smartphone comparison is well worn for good reason.

Picture yourself trying to fit a 120KB program onto an 80KB floppy disk. How would you have done it? The answers split into three broad strategies:

- Optimisation — Find efficiencies in how you deliver the features. Every character counts. Abstracting into reusable functions is the textbook example.

- Approximation — Sacrifice accuracy for fuzziness where you know it's acceptable. The video game OpenArena, for instance, used an approximation to calculate the reciprocal of the square root of a 32-bit float for its lighting and reflection maths. The result was no more than 0.175% off the true value — close enough to keep the game running at speed.

- Ruthless cuts — Once every last character was squeezed out, drop features you didn't really need. Knowingly sacrifice functionality.

What are the token-limit equivalents of those answers?

- Optimisation — There's a spectrum here, from crude to clever. At the crude end, you can truncate or randomly drop text. More elegantly, contextual compression rewrites the supporting information for a query using the context of that query, so only the most relevant material reaches the model (LangChain has built-in methods for this). "One-shot prompts" — where history is saved as context and you aim for a perfect response first time — cut down on round-trip overhead.

- Approximation — You can ask the LLM itself to summarise the current state and context before starting a new set of queries, accepting a degree of information loss. Text can be compressed with techniques like Abbreviated Semantic Encoding to bring the token count down further.

- Ruthless cuts — Sacrificing functionality is the last thing you want to do, but you can be more selective about what makes it into the model's context. Retrieval Augmented Generation (RAG) is the key technique here: rather than throwing everything at the model, RAG retrieves only the most relevant data and provides that as context. More sophisticated chains let the model refine its own retrieval criteria over multiple iterations, building up to the minimum context it needs to answer the original query.

Moore's law and tokens?

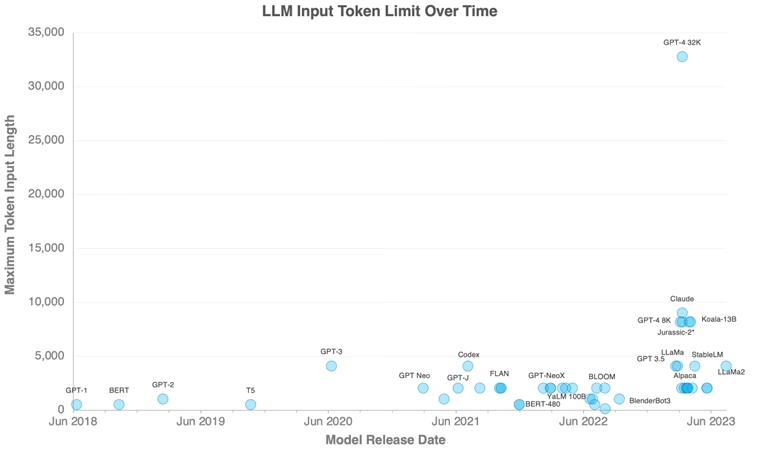

Is this a persistent constraint or a temporary one we'll engineer past? Plotting LLM input token limits over the last five years shows a gradual increase — from GPT-1's tentative 512 tokens, to the 4,096-token mainstream of 2022–23, with the first moves beyond that now appearing in the shape of Claude and GPT-4 8K. But those are the outliers, not the norm. We should expect to be working inside today's envelope for a while yet.

Worth noting: relief on query size will bring its own problems. The energy cost of an LLM query is already estimated to be 4–5x that of a traditional search engine query (per Martin Bouchard at QScale), and that's likely to scale faster as query sizes grow. Bigger isn't always better either — a recent Stanford paper found LLMs perform better when the relevant information sits at the beginning or end of the input context, not buried in the middle. There's a lot more to learn about how to shape queries before we optimise for raw capacity.

The conclusion: LLMs' strength as generalist tools is proving harder to replicate in highly specific industrial contexts, and token limits are part of the reason. But constraints like these are nothing new, and revisiting how earlier ones were handled gives us useful starting points. Waiting for higher token limits is tempting, but on balance, making better use of the resources available today will produce quicker and better domain-specific solutions. The constraints just mean we need to be more thoughtful about how we get there — which, as it happens, is exactly the kind of problem QR_ is built to solve.